Proponents of AI-powered chatbots promise they are there to lighten the mental load for humans, so we put them to the test: How well could they handle the onslaught of typical farmer questions fielded by an Extension weed specialist or agent on a weekly basis?

We tested three of the most popular “corporate” large language models – ChatGPT, DeepSeek and Google Gemini – but also threw a smaller bot named ExtensionBot into the mix. Developed by the non-profit Extension Foundation, this bot aims to pull all its material from Cooperative Extension institutions. (Read more about our first review of it here.)

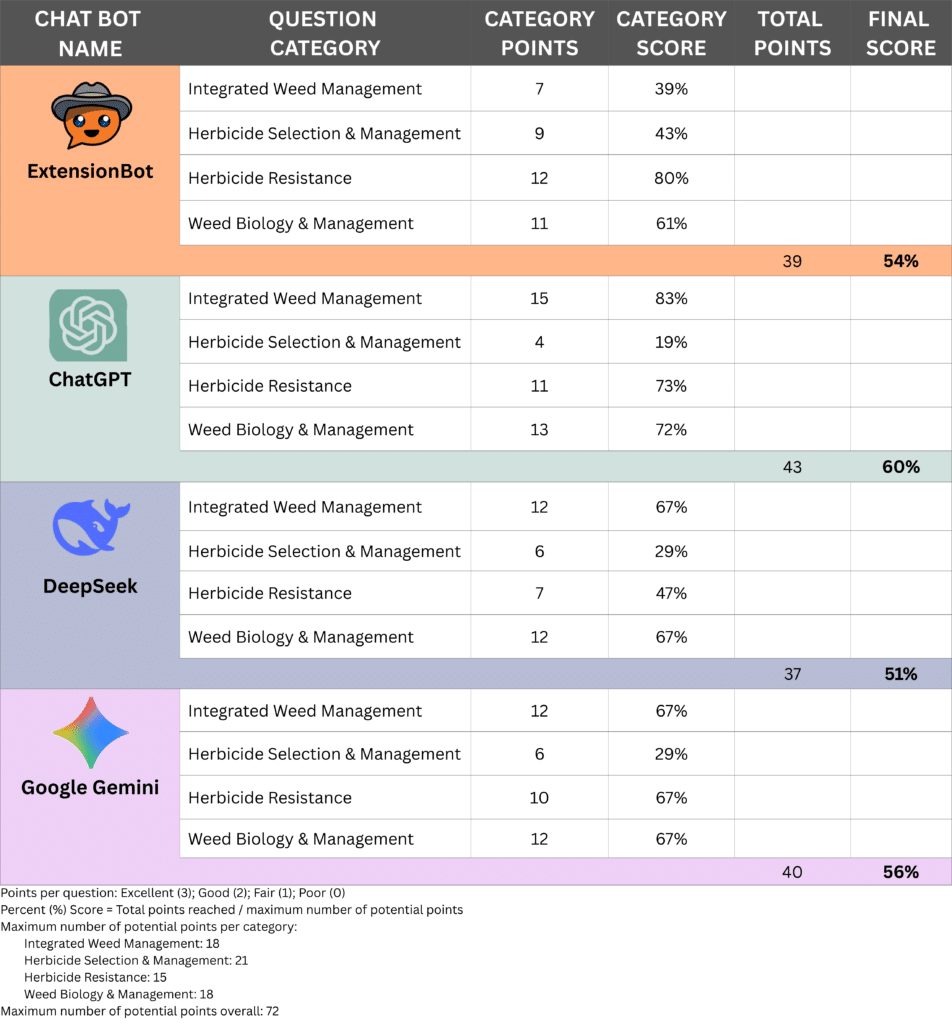

The four chatbots all performed fairly similarly, with the most highly used bot, ChatGPT, garnering the highest score. But hold your applause for this popular AI tool – its winning score was a mere 60%, or a D- by most school grading systems in the U.S.

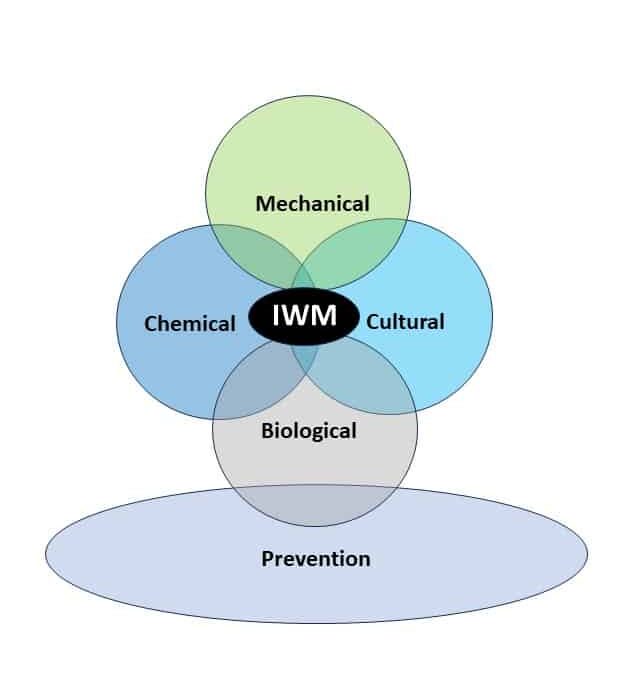

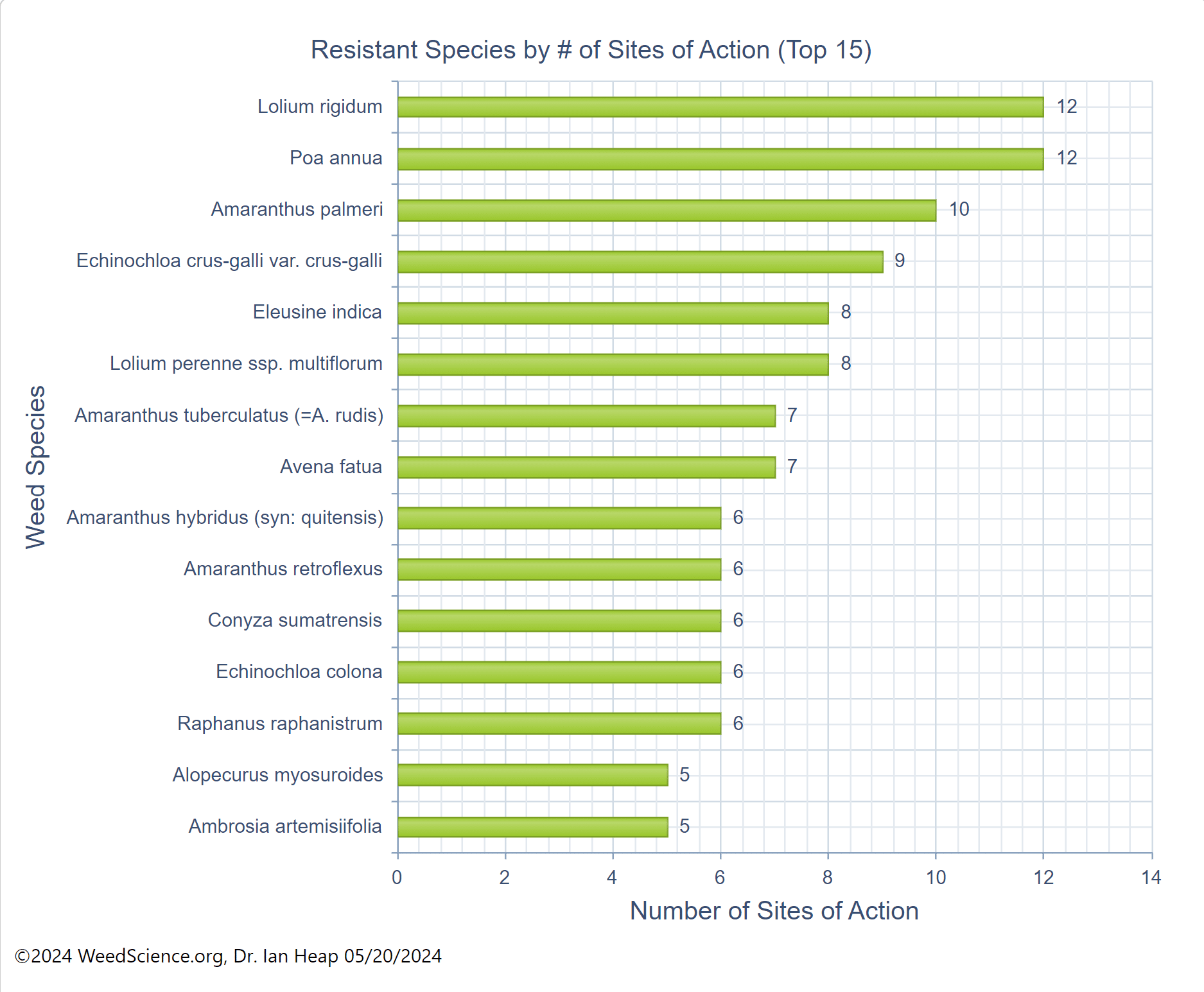

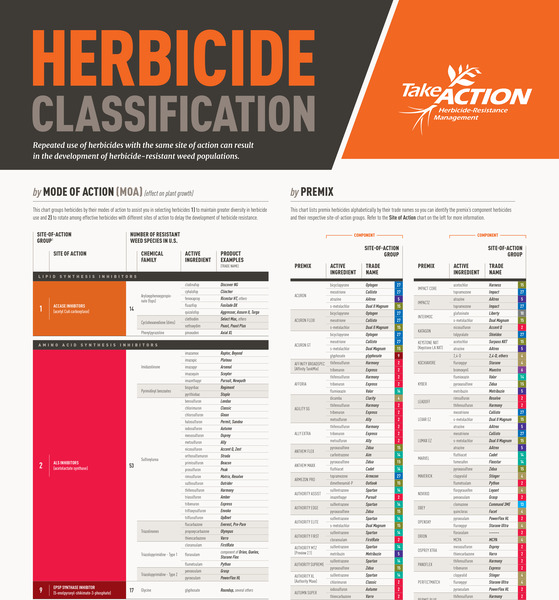

The other bots’ scores ranged from 51% to 56% when answering a series of 24 questions our testers threw at them, ranging in topic from integrated weed management to herbicide use, herbicide resistance and weed biology.

Our four testers, Drs. Bill Curran, Michael Flessner, Mark VanGessel and John Wallace, tried to model their questions realistically, writing some simple ones, mixed in with more complicated, multi-step questions and even the occasional misspelling or error. They rated the bots’ answers as either excellent (3 points), good (2), fair (1) or poor (0).
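For readers curious how a percentage can come out of those ratings, here is a minimal sketch. It assumes each bot's score is simply the points it earned divided by the maximum possible points; the article does not spell out the exact formula. The function name `percent_score` and the sample ratings are hypothetical, made up for illustration, and are not the testers' data.

```python
# Points per rating, as described in the article.
RATING_POINTS = {"excellent": 3, "good": 2, "fair": 1, "poor": 0}


def percent_score(ratings):
    """Return points earned as a percentage of the maximum possible points.

    Assumption: score = earned / (3 * number of rated answers). The article
    does not confirm this is how the published percentages were computed.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    earned = sum(RATING_POINTS[r] for r in ratings)
    possible = max(RATING_POINTS.values()) * len(ratings)
    return 100 * earned / possible


# Hypothetical ratings for 24 questions (illustrative only, not real results).
example = ["excellent"] * 3 + ["good"] * 9 + ["fair"] * 9 + ["poor"] * 3
print(f"{percent_score(example):.0f}%")  # 36 of 72 possible points
```

Under this assumption, a bot rated "good" on every question would land at about 67%, so a score near 60% means the average answer fell a little short of "good."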

Most Bots Score Poorly on Transparency

While ExtensionBot’s 54% overall score landed it in third place, it swept the other bots away in an increasingly important category: transparency.

Only ExtensionBot consistently linked to the sources of its information, allowing our testers to learn more about a topic, as well as verify the bot’s accuracy. In contrast, ChatGPT and DeepSeek rarely provided any sources for the information they issued to the user, and Google Gemini provided sources only about half of the time.

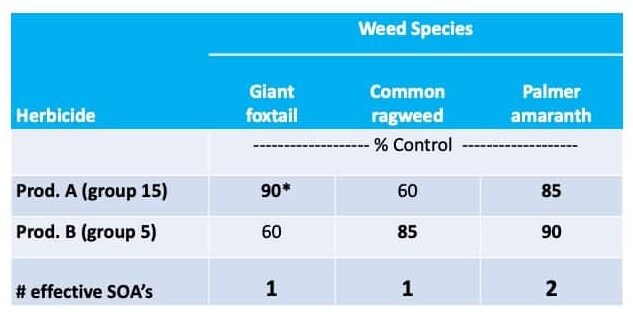

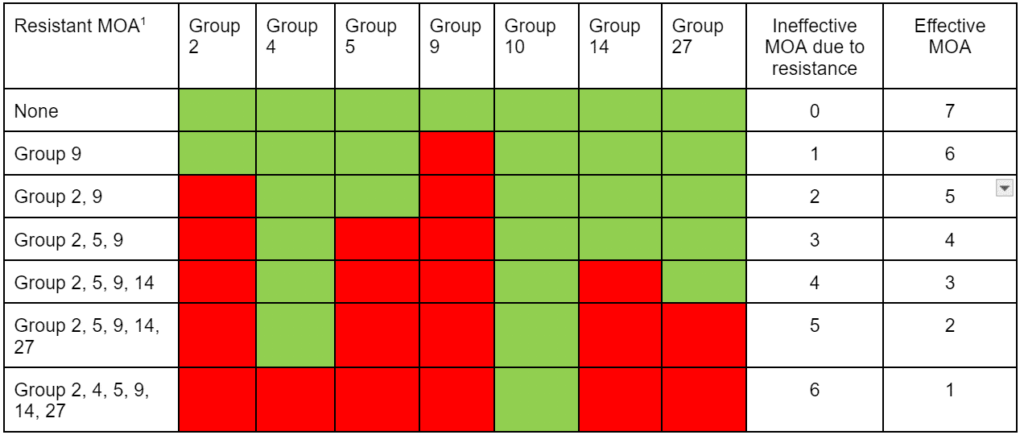

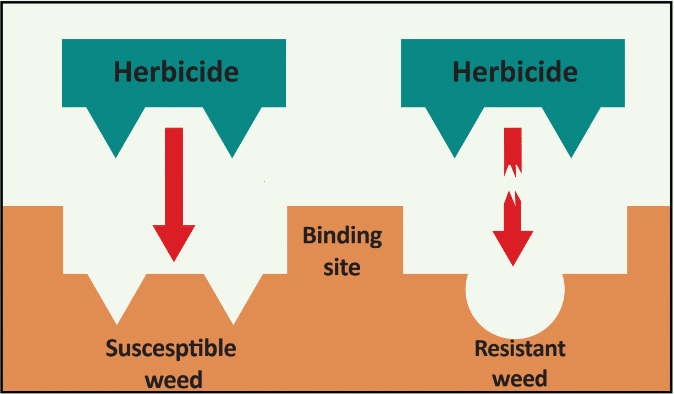

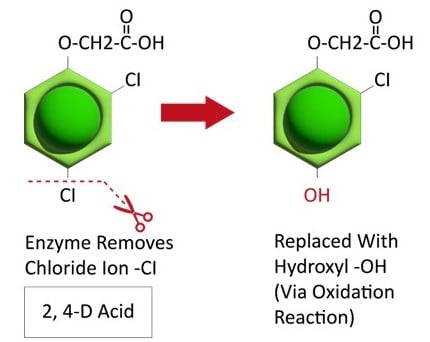

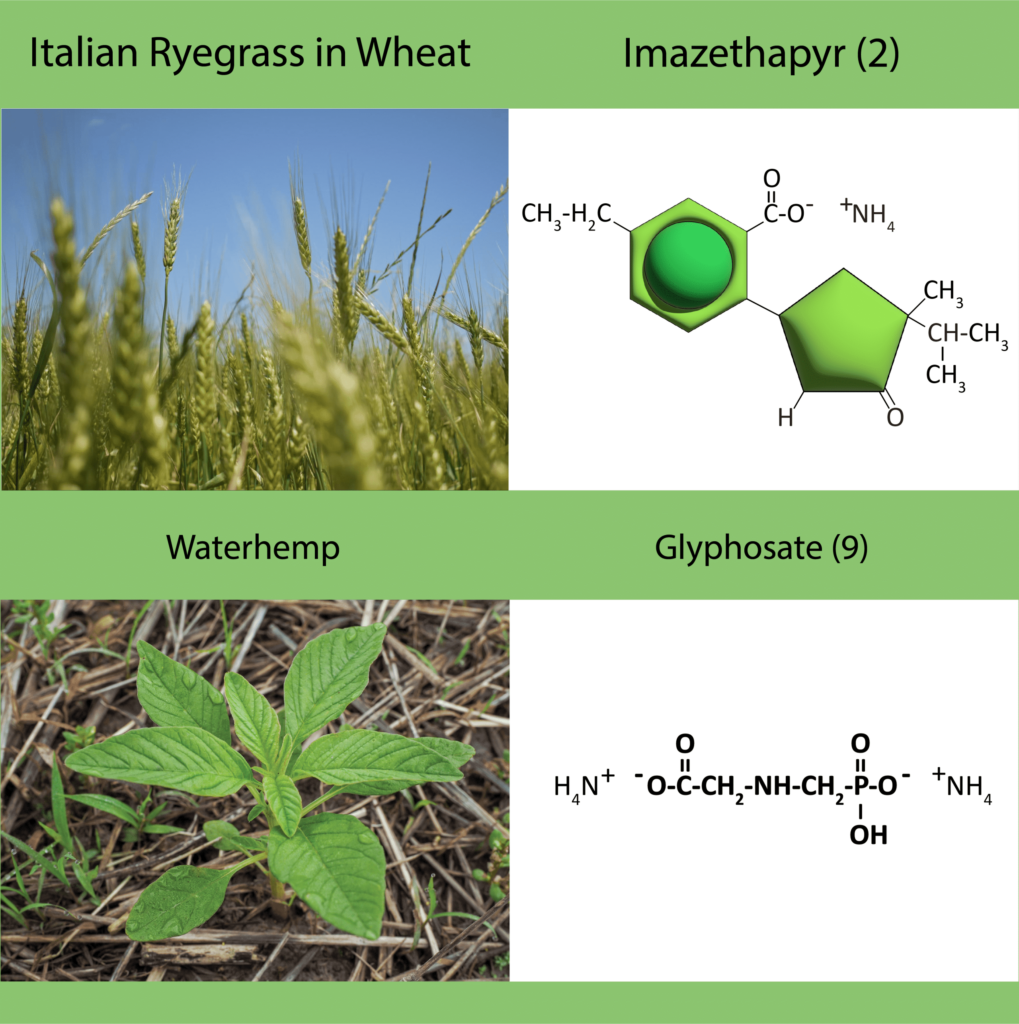

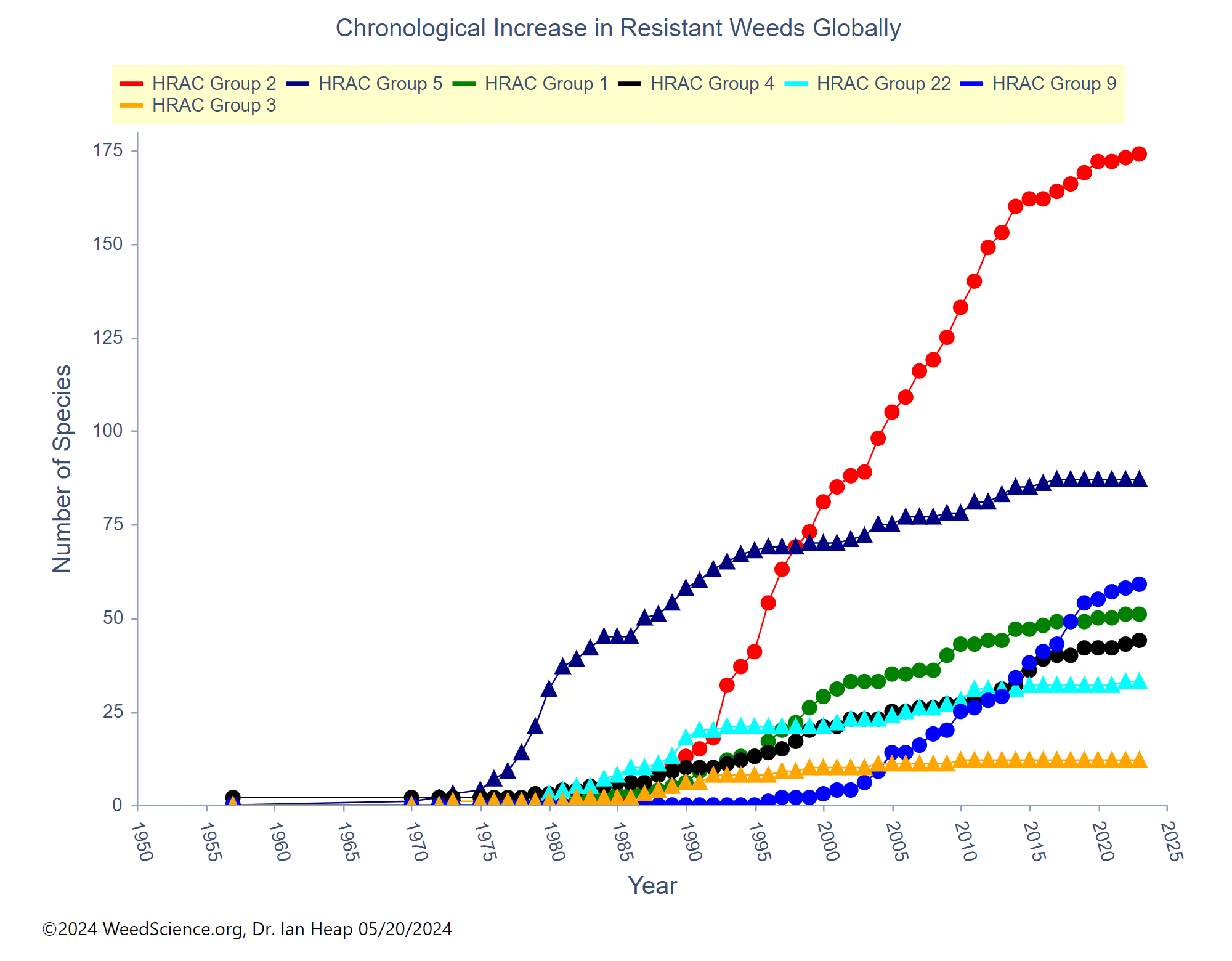

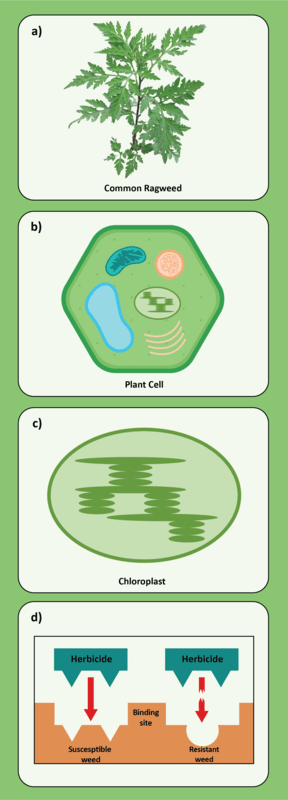

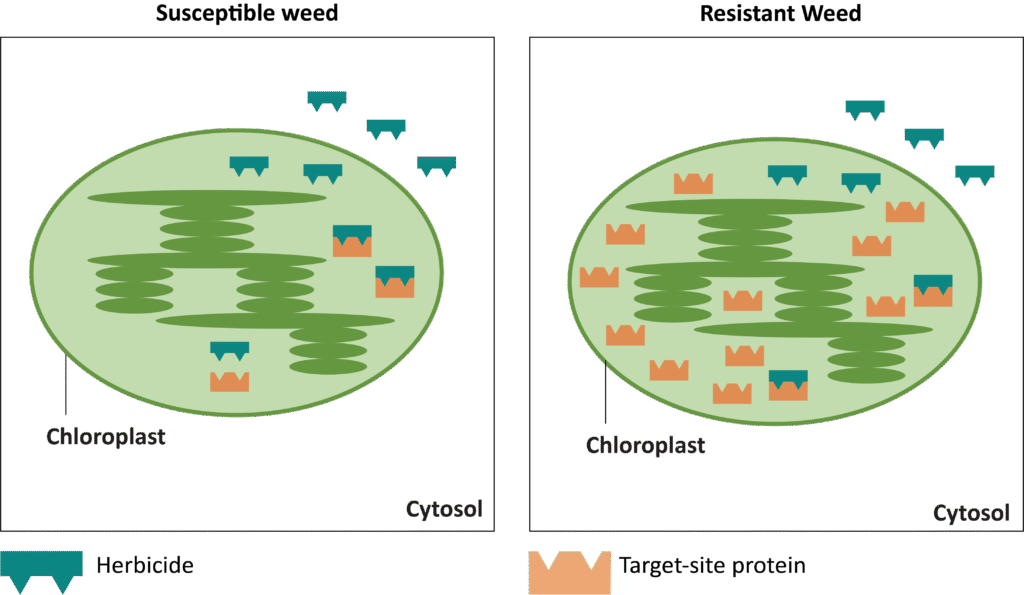

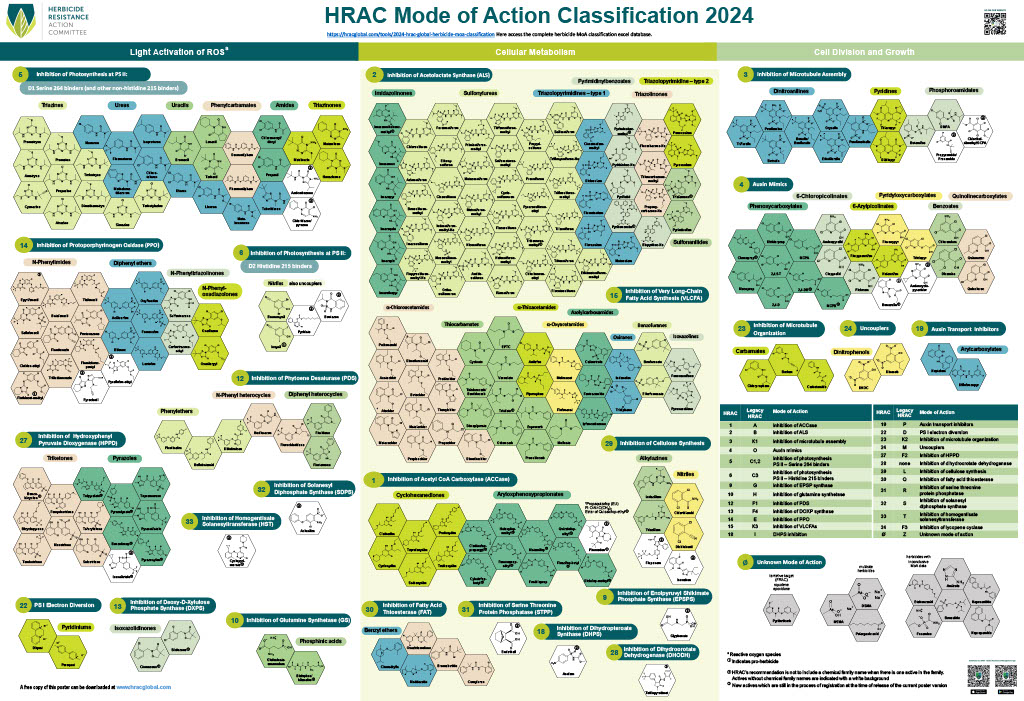

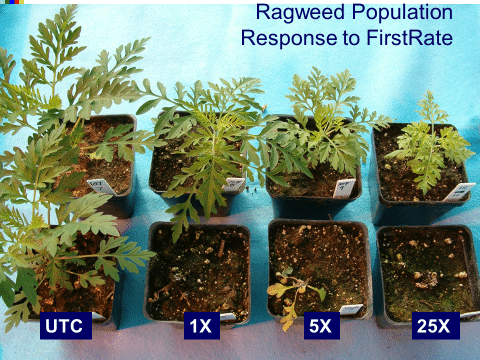

The lack of transparency from these popular AI tools is especially problematic given that they were sometimes wrong. For example, when Penn State scientist John Wallace asked DeepSeek if metribuzin would control atrazine-resistant pigweeds, the AI bot confidently informed him that it would because metribuzin is a “key alternative mode of action.”

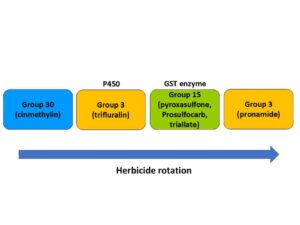

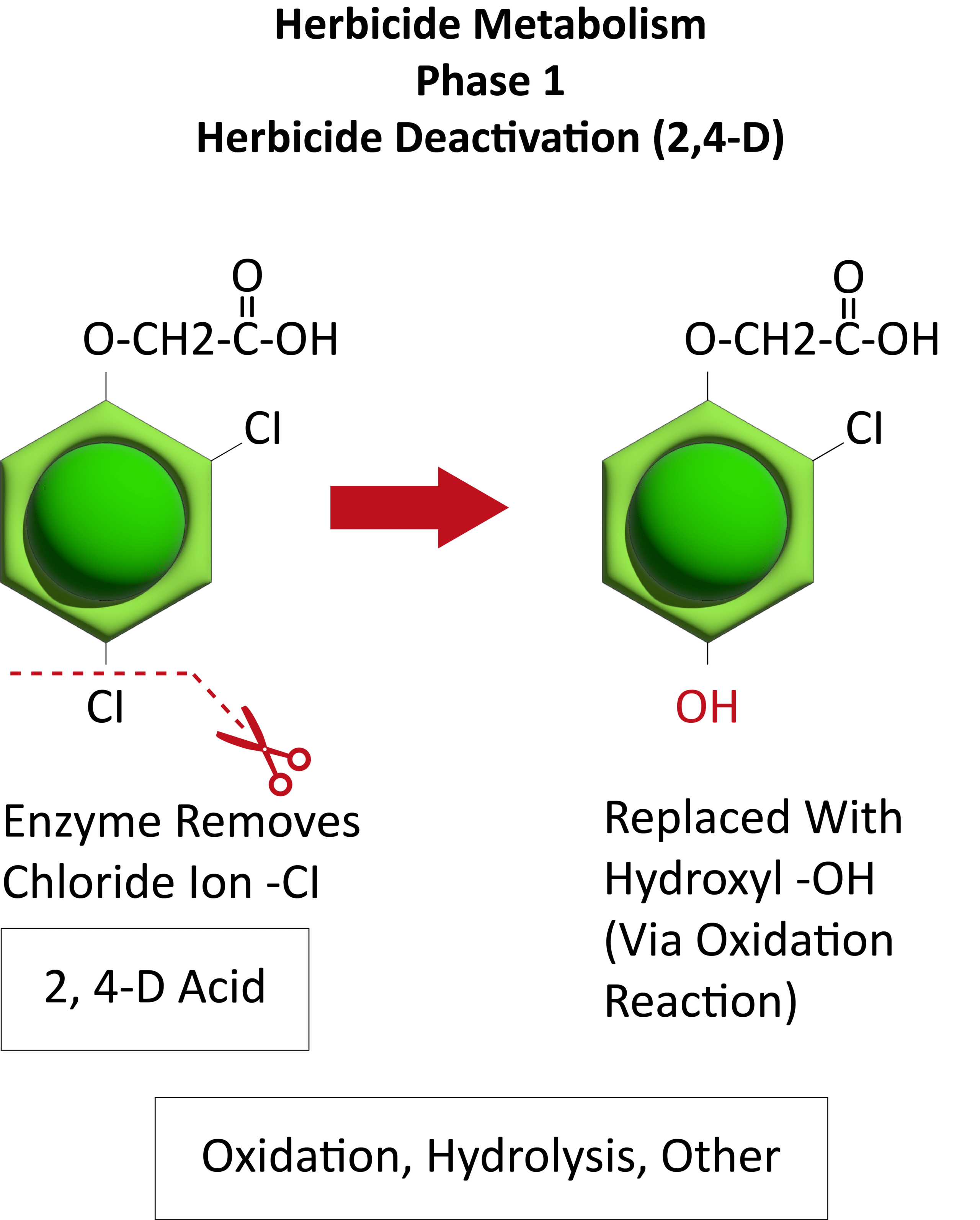

But the real answer is different and far more nuanced, Wallace explains. Metribuzin and atrazine are both Group 5 Photosystem II herbicides, and cross resistance can occur – depending on the mechanism of atrazine-resistance. With no sources to back up or refute its claim, the DeepSeek model not only potentially misinformed its user in this case, but did so with a near-permanent effect.

In another case, Google Gemini informed Wallace that herbicide resistance is responsible for only one out of every 10 herbicide application failures, an elusive statistic for which the bot provided no source. “I’m left wondering where they came up with that number,” Wallace says.

Herbicide Labels Stump AI

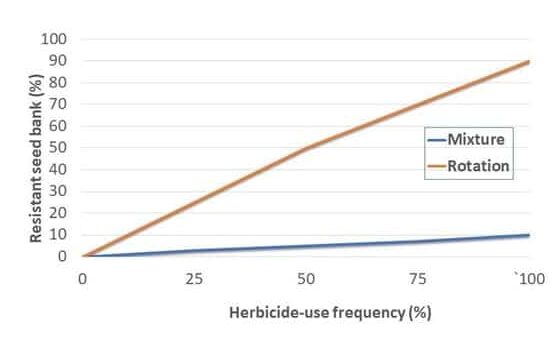

ExtensionBot also held its own against its corporate competitors in answers on herbicide resistance, but was outpaced by them on questions about integrated weed management and weed biology. ChatGPT did particularly well on questions of integrated weed management, noted tester Bill Curran, though it sometimes generated incorrect answers a couple times before generating an accurate one, and again, never provided any sources.

The Extension-driven bot did twice as well on questions about individual herbicide management – a category that the other bots performed very poorly on.

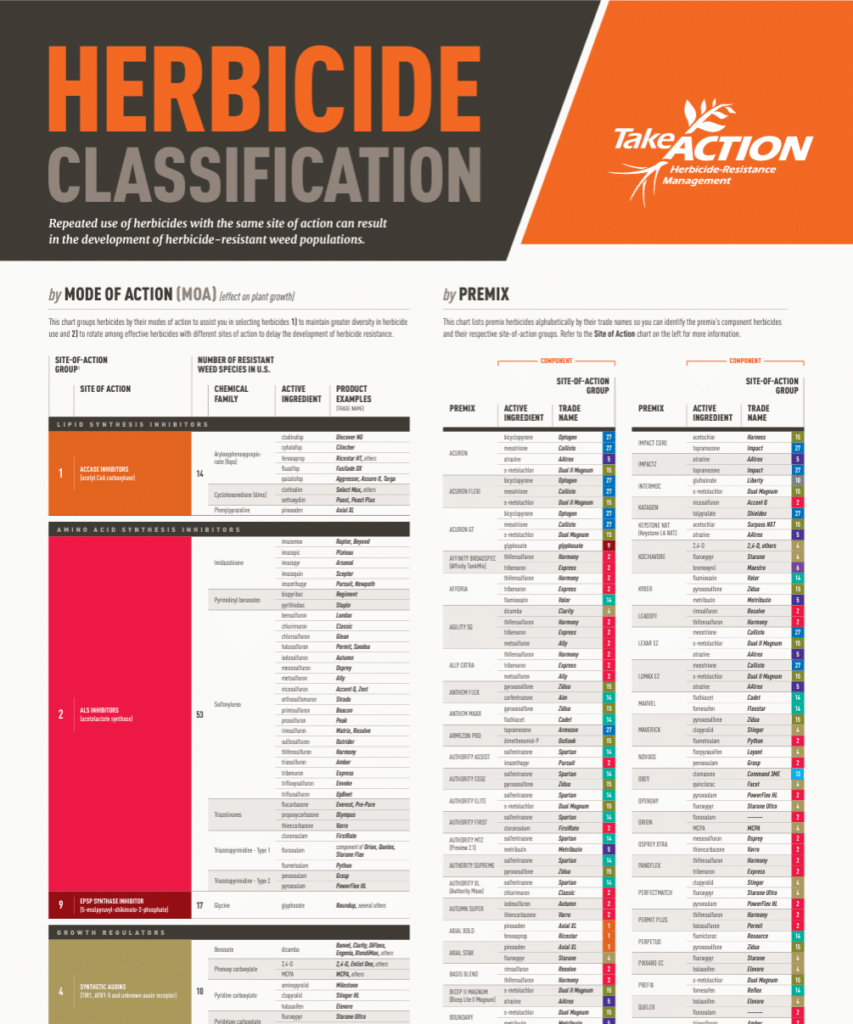

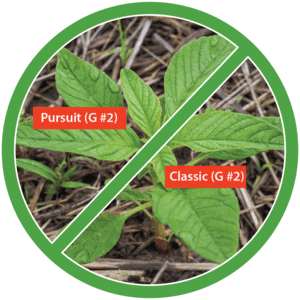

Overall, reading herbicide labels accurately and comprehensively was a major pain point for all the bots. The three corporate bots’ scores ranged from 19% to 33% on that topic, while ExtensionBot topped the group at 43%. The bots didn’t necessarily answer incorrectly, but they nearly always failed to read the labels completely and misinterpreted the sometimes-contradictory language of lengthy herbicide labels.

For example, when asked if clethodim can be used for grass control in alfalfa, all the bots answered yes, but each then failed to address important nuances, such as failing to mention that some grass species are NOT susceptible, failing to alert users to tank-mix antagonism risks and never directing users to read the label. DeepSeek almost hit the mark on this one, but its answer went astray when the bot made an odd pivot to another topic. “It talked about autotoxicity of alfalfa, which has nothing to do with the question that was asked,” recalls Dr. VanGessel.

Another hurdle for the chatbots’ approach to label advice? Outdated materials drifting around the web. “Many versions of labels exist on the internet, likely contributing to the bots’ errors,” Flessner explains. “But only one label – the one on the jug – matters.”

Bots Navigate Vast Information Landscapes

The difference in responses from ExtensionBot and its larger competitors proved an interesting study in the value of limited versus abundant sources, the testers noted.

Sometimes, ChatGPT, DeepSeek and Gemini’s ability to sweep across the vast internet landscape paid off. For example, ChatGPT’s response to a question on using flaming as a tool for weeds delved deep into many factors, from weed seed biology to economics, tester Bill Curran found, though he couldn’t see its sources. In contrast, ExtensionBot, which pulled from only a few sources, provided an accurate, but shallower analysis of flaming.

Other times, ExtensionBot’s ability to avoid the clutter of the worldwide web proved helpful.

When asked how to determine if a weed’s survival is due to herbicide resistance, the corporate AI bots often pulled in strange, out-of-place details (such as Gemini’s unsourced 1-in-10 statistic). But ExtensionBot, which pulled its information from just a small handful of Take Action and state Extension factsheets, nailed the question, earning a “very good and succinct” remark from its tester.

Wrong Today, Right Tomorrow?

Perhaps the most curious conclusion about the large language model chatbots is that any review of them – including this one – is only accurate for a moment in time.

As tester Curran noted, the bots often generated different answers upon a second or third query. If identical questions were fed into the same bots today, the results would likely look different.

What does that mean for their value as crop advisors to the agricultural industry?

Maybe consider AI bots only a first step in seeking out help on agricultural questions, particularly nuanced ones and definitely legal ones involving labels, the scientist testers concluded.

Learn more about ExtensionBot and the Extension Foundation here.

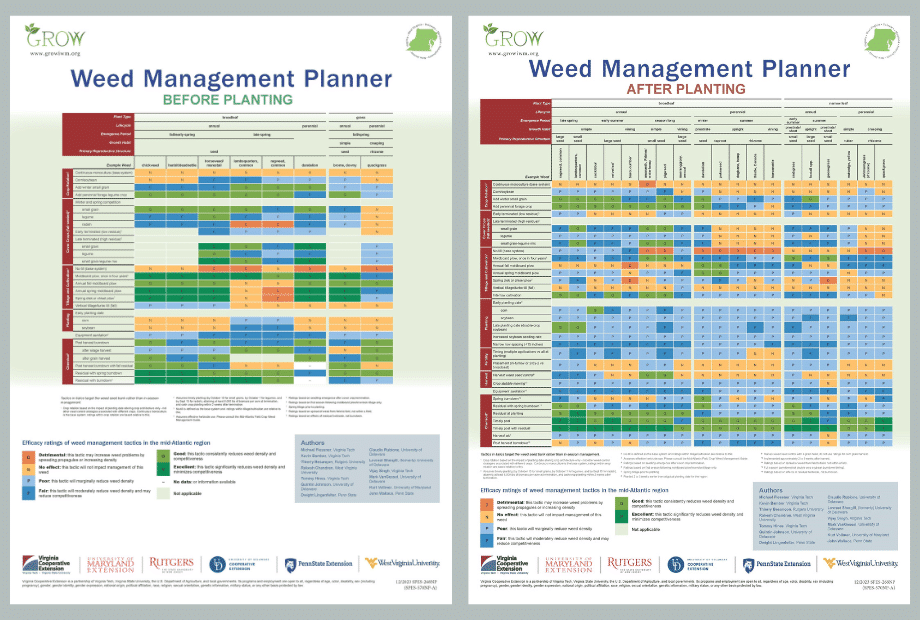

Learn more about GROW and its weed management resources here.

Text and photo graphics by Emily Unglesbee, GROW; header image by USB